Sep 29, 2017 · newsletter

Futurism and Artificial Intelligence

For some, Mayweather-McGregor was the prizefight of the summer. For others, it has been Musk-Zuckerberg going toe-to-toe over the risks posed by AI, with Musk voicing his reservations about artificial intelligence while Zuck remains more sanguine. Musk has called AI possibly the “biggest threat” to humanity and gone so far as to voice the decidedly un-Catholic opinion that Silicon Valley should welcome regulatory oversight of AI in this one exceptional instance. Some have responded to Musk’s statements by accusing him of having watched Terminator a few times too many, while others have taken them as license to trumpet their own dire warnings about the threat of AI or to report gleefully on the blow-by-blow.

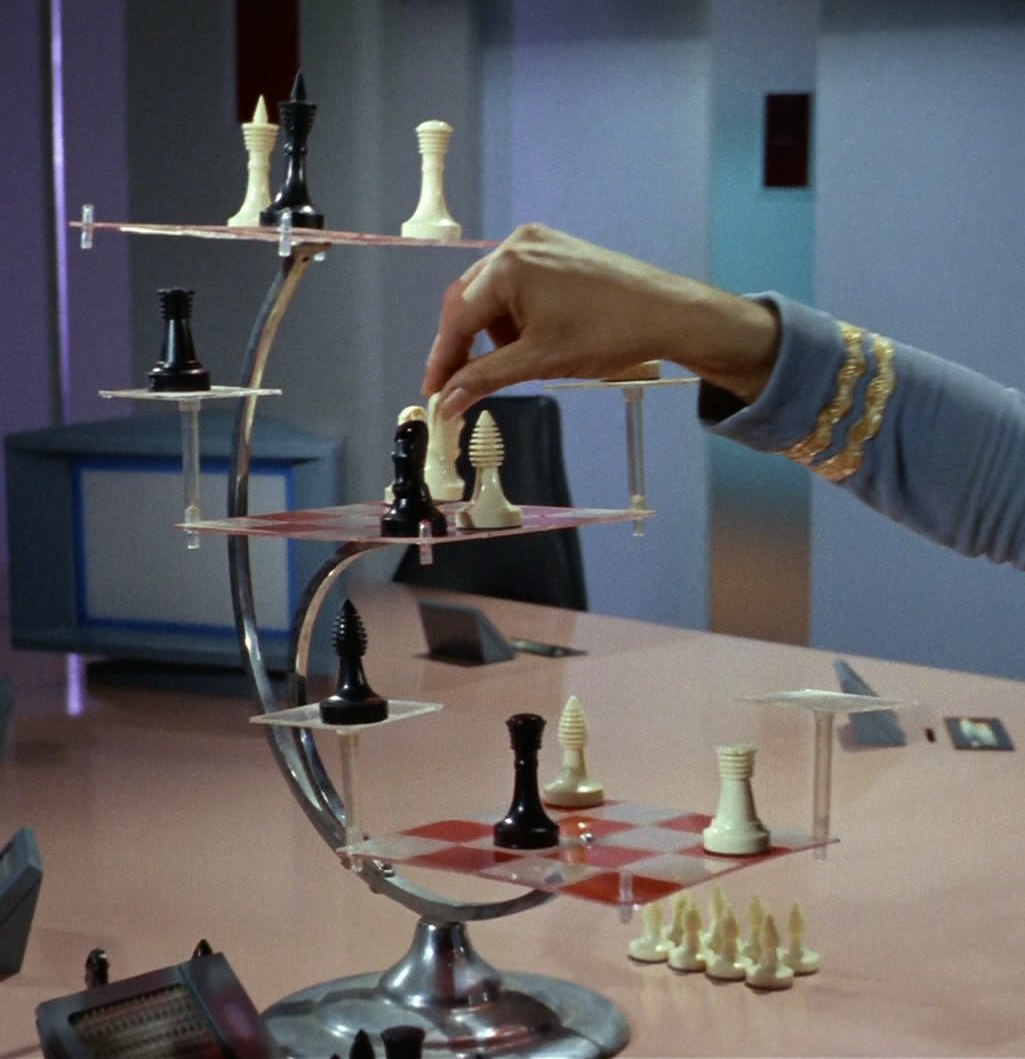

Unsurprisingly, many of those who have chimed in about the debate have turned to sci-fi for guidance in thinking through the dangers of AI. Rodney Brooks cites Arthur C. Clarke’s Adages of Futurism while Oren Etzioni name-checks Asimov’s Laws of Robotics before suggesting his own Laws of AI. These are useful, and insightful, approaches to futurism in general, and there are good reasons why we still talk about Clarke and Asimov - their insights remain evergreen. We are hesitant, however, to see warnings about AI, particularly Musk’s, as solely prescient warnings about the dangers he perceives. We are also a bit hesitant to describe anyone as playing 11-dimensional chess, but there may be more than meets the eye to Musk’s warnings. Consider three possible ulterior motives for sounding the alarm on AI as Musk has:

One - it’s great marketing. Marketing on fear, uncertainty, and doubt (FUD) is not new, and deploying these emotions around AI is a great way to drive consumers away from competitors, or at the very least towards your own AI research (…cough… OpenAI).

Two - it secures a seat at the table. By calling for regulation of AI now, Musk is positioning himself as someone who has a healthy skepticism of AI, and who can therefore be trusted when he declares regulations to be effective safeguards against the risks he perceives. What better way to be able to shape regulations that go exactly as far as he might want (and no further)?

Three - it’s great recruiting. Competition for talented AI researchers and engineers is fierce. This is known. But by calling out the risks of AI as forcefully as he has, Musk may be making a subtle appeal to top-notch researchers who may be on the fence about joining the ranks of larger engineering teams, or vacillating about leaving academia, by signaling the opportunity to work on solving (what seem like) existential challenges for humanity. Not a bad recruiting poster.

So what can we take away from thinking about these possible ulterior motives for the recent spate of dire (if premature and exaggerated) warnings about the risks of AI? One takeaway is a recognition that there are real risks to humans from ML and AI. Building systems that are interpretable, that consider aspects of fairness and accountability, and that recognize the potential for harm that today’s algorithms possess is not just responsible - it’s good design. Building trustworthy systems is also good recruiting and good marketing. Another takeaway is that we should all feel empowered to articulate our own “Laws of Robotics,” “Adages of Futurism,” or “Laws of AI.” This may sound like another principle of sound design (and it is), but it is also a way of thinking through potential risks, and ways of countering them, that is specific to the context of the product being built or the problem being solved.